Building a Sustainable Rolling Survey Practice

I’ve worked on or built a few rolling quantitative research efforts, and I have some thoughts on what makes the practice sustainable. In my experience, recurring surveys become much easier to deploy if you’ve done the hard work upfront.

A sustainable survey practice is about building something you can reuse. More importantly, it should be centered around getting consistent, comparable data over time, without having to start from scratch every cycle.

Before setting anything up, it’s worth asking yourself or your team:

What are we actually trying to sustain?

What are the core metrics we care about? (What’s our North Star, and how does it show value?)

What trends do we want to track over time? What insights do we want to gain? (QoQ, YoY, product changes?)

How often can we realistically deploy this?

Can we reliably reach the audience we want?

What budget supports this as an ongoing effort?

What are our data retention constraints? (This will impact whether you can make comparative evaluations.)

These questions matter because the answers determine whether a rolling survey practice is needed at all. If your team wants to track change over time, consistency in the questions matters. If the team frequently changes direction, the upfront work of programming this may not be worth it.

When your survey is well-defined, both in terms of questions and metrics, it makes everything downstream easier because analysis becomes repeatable, comparisons are easier to make, and you (or your team) spend less time trying to Sherlock Holmes past data or survey logic.

Before the first deployment, treat the survey like a product

Before launching, run a few cognitive interviews. This helps you and your team understand if:

Questions are being interpreted the way you intend

Any questions are unclear, confusing, or repetitive

A way I’ve run cognitive interviews is to ask participants to:

Read the question out loud and provide an answer based on what they’ve read

Interpret the question and terms in their own words, and explain why they chose their answer

Share what felt confusing and what additional detail would make it easier to answer

Also, pay attention to where they pause or hesitate while reading. Hesitation is a sign that the question may need revising, while a smooth read suggests it is easy to understand.

A simple and low-cost way to do a cognitive test is to recruit colleagues outside your team.

The unglamorous setup work matters

Most of the effort in rolling research is front-loaded and easy to underestimate. The initial setup reduces future friction because you spend less time decoding past survey data or trying to understand previous survey logic.

If you’re using tools like Qualtrics:

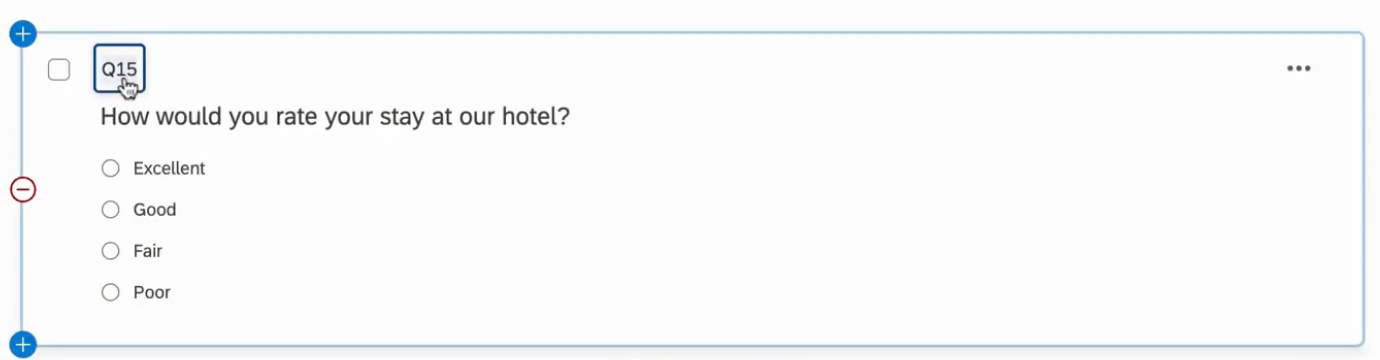

Assign consistent question names (see image 1). Instead of “Q1,” use something like “engage.” This ensures that when you download your data, it’s clearly labeled and easier to work with in your statistical software (hint hint: glimpse() in R).

Build a data dictionary to streamline analysis. You can export this from Qualtrics. It is particularly important if you export your data as values rather than labels.

Save a reusable template in your team’s library. This ensures question names, logic, and embedded data stay consistent, so all you need to do is duplicate and deploy. It also reduces the friction of keeping your survey rolling.
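As a rough illustration of how consistent question names and a data dictionary work together, here is a minimal Python sketch (the same idea carries over to R). The question names `engage` and `recommend`, the value codes, the labels, and the `label_responses` helper are all hypothetical and not taken from a real Qualtrics export.

```python
# Hypothetical data dictionary: question name -> {numeric value: label}.
DATA_DICTIONARY = {
    "engage": {1: "Never", 2: "Monthly", 3: "Weekly", 4: "Daily"},
    "recommend": {1: "No", 2: "Yes"},
}


def label_responses(rows, dictionary):
    """Return copies of rows with numeric values replaced by labels.

    Columns that are not in the dictionary pass through unchanged,
    and values with no matching code are left as they are.
    """
    labeled = []
    for row in rows:
        new_row = {}
        for name, value in row.items():
            codes = dictionary.get(name, {})
            new_row[name] = codes.get(value, value)
        labeled.append(new_row)
    return labeled


# Rows as they might look after exporting values rather than labels.
raw_rows = [
    {"response_id": "R_001", "engage": 4, "recommend": 2},
    {"response_id": "R_002", "engage": 2, "recommend": 1},
]

labeled_rows = label_responses(raw_rows, DATA_DICTIONARY)
print(labeled_rows[0])
```

Because the dictionary is keyed by question name, it only keeps working across deployments if names like `engage` never drift back to “Q1.”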

Because your questions and naming conventions are consistent, you only need to write your code once. Save it somewhere accessible, and it becomes reusable for each QoQ or YoY deployment.
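To make the “write your code once” idea concrete, here is a minimal Python sketch of an analysis that can be rerun on every wave without edits. The `engage` metric, the wave labels, the sample values, and both function names are assumptions for illustration only.

```python
from statistics import mean


def summarize_wave(rows, metric):
    """Return the mean of one named metric for a single survey wave.

    Blank answers (None) are skipped so partial responses don't break the run.
    """
    values = [row[metric] for row in rows if row.get(metric) is not None]
    return round(mean(values), 2) if values else None


def compare_waves(waves, metric):
    """Return (wave label, mean, change vs. previous wave) for each wave in order."""
    results = []
    previous = None
    for label, rows in waves.items():
        current = summarize_wave(rows, metric)
        change = None
        if current is not None and previous is not None:
            change = round(current - previous, 2)
        results.append((label, current, change))
        previous = current
    return results


# Hypothetical responses from two quarterly deployments of the same template.
waves = {
    "2024-Q1": [{"engage": 3}, {"engage": 4}, {"engage": None}],
    "2024-Q2": [{"engage": 4}, {"engage": 5}, {"engage": 4}],
}

for label, avg, change in compare_waves(waves, "engage"):
    print(label, avg, change)
```

Adding a new quarter is then just one more entry in `waves`; nothing in the functions needs to change as long as the question name stays the same.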

(NOTE: You can also do this with more manual software like Google Sheets or Excel, where you create a dashboard that tracks charts and trends. But again, it requires A LOT of manual work to set up. It took me around 1.5 weeks of work.)

Image 1: Question naming that will show in the first column when you extract your data.

Track longitudinal changes by creating a log

I know a rolling survey practice can sound inflexible, BUT it’s natural for a team to evolve over time. I only raise that caution because some consistency is important to help you identify trends or shifts (hence the North Star metric question).

If your team’s needs do evolve, my suggestion is to keep a log of what has been added, removed, or updated. This helps you and your team understand which QoQ or YoY comparisons can (and can’t) be made, and identify changes in your respondents’ behavior that come from the way your survey is constructed.
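One possible shape for such a log, sketched in Python: each entry records the wave, the question name, and what happened, and a small check tells you whether two waves can be compared for a given question. The `Change` fields, the example entries, and the `is_comparable` helper are hypothetical, and the check assumes wave labels like “2024-Q2” sort chronologically as text.

```python
from dataclasses import dataclass


@dataclass
class Change:
    wave: str        # wave in which the change first took effect
    question: str    # consistent question name, e.g. "engage"
    action: str      # "added", "removed", or "updated"
    note: str = ""   # free-text description of what changed


# Hypothetical log entries.
CHANGE_LOG = [
    Change("2024-Q2", "engage", "updated", "Reworded answer options"),
    Change("2024-Q3", "onboard", "added", "New onboarding question"),
]


def is_comparable(question, earlier_wave, later_wave, log):
    """True if no logged change touched the question between the two waves.

    A change counts if it took effect after earlier_wave and no later
    than later_wave. Assumes wave labels sort chronologically as strings.
    """
    return not any(
        c.question == question and earlier_wave < c.wave <= later_wave
        for c in log
    )


print(is_comparable("engage", "2024-Q1", "2024-Q2", CHANGE_LOG))  # False
print(is_comparable("engage", "2024-Q2", "2024-Q3", CHANGE_LOG))  # True
```

A shared spreadsheet with the same three columns would serve the same purpose; what matters is that every change is recorded against the wave where it first applied.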